Do you actually know what happens to your conversations, your image, your surroundings after they’re captured by an AI-powered device?

If your answer is “not really,” you’re not alone. And this week, a series of revelations made it clear that the gap between what we think is happening with our data and what’s actually happening with it is wider than most of us realized.

A joint investigation by Swedish newspapers Svenska Dagbladet and Göteborgs-Posten dropped a bombshell: when users activate the AI assistant on Meta’s Ray-Ban smart glasses by saying “Hey Meta,” the footage isn’t just processed by algorithms. It’s routed to Sama, a data annotation company in Nairobi, Kenya, where human contractors review and label the video to train Meta’s AI.

What those workers are seeing is deeply concerning. Contractors described reviewing footage of people’s bank cards, explicit content, users in bathrooms, and people undressing without any knowledge they were being recorded.


One worker told investigators they don’t think the people in the footage know they’re being captured, because if they did, they wouldn’t be recording.

Meta says it blurs faces in footage sent for annotation. But former employees and current workers told investigators the system regularly fails, especially in difficult lighting conditions. Workers also noted their personal phones are banned inside the office, likely to prevent footage from leaking.

As of this week, Meta is facing a class action lawsuit, filed March 4 in federal court in San Francisco. The plaintiffs, who purchased the glasses based on marketing that called them "designed for privacy," allege Meta failed to disclose the role of human reviewers in its AI training pipeline.

The complaint, per Engadget, argues the undisclosed human review process transforms the product from a personal device into what it calls a “surveillance conduit.”

Meta’s defense has been consistent: the data is filtered, the practice is disclosed in their privacy policy, and it’s in line with industry standards. But here’s the thing. The privacy policy governs the wearer’s agreement with Meta. If someone wearing these glasses walks into your home, your doctor’s office, or your bedroom, you never signed anything. You’re the person being annotated.

For European users, this runs headfirst into GDPR, which requires a lawful basis, such as consent, for processing the personal data of every data subject, bystanders included. And Kenya, where the footage lands, has no EU adequacy decision, which raises serious questions about the legality of these cross-border data transfers.

This Isn’t an Isolated Incident

The privacy risks around wearable AI have been flagged before. In October 2024, two Harvard students, AnhPhu Nguyen and Caine Ardayfio, built a project called I-XRAY that paired Meta’s Ray-Ban glasses with facial recognition software and public databases. They were able to identify strangers on campus in under a minute, pulling up names, home addresses, and phone numbers just by looking at someone. They never released the code. They built it as a proof of concept to show how easy it could be to exploit everyday wearable tech, and the demonstration went viral.

Meta has sold an estimated 7 million Ray-Ban AI glasses in 2025 alone, triple its 2023 and 2024 sales combined. In the interest of transparency: I have worked with Meta before and have been gifted a pair. The glasses look like ordinary sunglasses, which is precisely what sets them apart from Google Glass, a product that died partly because its wearers were so easy to spot. Meta solved the design problem, but it seems the privacy problem never really got solved. It got hidden.

The Workplace Is Having the Same Conversation

This isn’t just about wearables. The same privacy tension is playing out in offices and virtual meetings right now.

AI transcription tools like Otter.ai, which integrates with Zoom, Google Meet, and Microsoft Teams, have exploded in workplace adoption. But in August 2025, Otter was hit with a federal class action lawsuit alleging it secretly recorded private conversations and used them to train its machine learning models without proper consent from all participants. The plaintiff wasn’t even an Otter user.

His conversations were captured simply because someone else in the meeting was running the tool.

Users have shared stories on social media about Otter’s automated tools backfiring: recording meetings with investors and then sharing transcripts containing confidential deal details, or continuing to capture audio after attendees had left a call. As the IAPP noted, organizations are now grappling with what it calls “the second wave of AI governance”: managing not just what goes into AI tools, but what those tools capture from us.

Over a dozen U.S. states require all-party consent before a conversation can be recorded, which is one reason you may have noticed the consent pop-up that now appears when someone starts recording a meeting. However, smart glasses and AI transcription tools create scenarios where bystanders, coworkers, or meeting attendees may be recorded, or have their conversations documented, without ever knowing it. As workplace privacy lawyers have pointed out, traditional recording often requires deliberate action. AI glasses and transcription bots, by contrast, can passively capture and transcribe conversations throughout the day, creating permanent, searchable records of discussions that participants never knew were being documented.

Why This Matters for Creators and Entrepreneurs

If you're building a brand, producing content, or running a business, this is directly relevant to you. Your meetings are being transcribed, your face is in datasets, and your voice could be training an AI model you've never heard of. And the terms of service you clicked through two years ago may have given a company permission to do all of it. Let's be honest: most of us don't read those terms in full, but that's a whole other story.

This cuts both ways, though. The Ray-Ban Meta glasses became a hit with creators for good reason: hands-free POV content, vlogging without a visible camera, and capturing moments on the go.

But now there are real questions creators need to ask themselves before they hit record. Are you capturing bystanders who never consented? Are you filming in spaces where people have a reasonable expectation of privacy? Is the footage you're shooting being sent to third-party reviewers you didn't know existed? The same device that makes content creation seamless may also make passive surveillance seamless, and the line between the two is thinner than most creators realize. Whether you're the one wearing the glasses or the one standing next to someone who is, there are a lot more factors to consider now than just the shot.

A 2025 Cisco benchmark study found that 64% of respondents worry about inadvertently sharing sensitive information with generative AI tools, yet nearly half admit to inputting personal or non-public data. That tension between convenience and control is where most of us are living right now.

The EU AI Act, which becomes fully applicable in August 2026, will establish new requirements around data protection impact assessments, audit trails, and human oversight for high-risk AI systems. In the U.S., 18 state privacy laws are now active, and enforcement is accelerating. Colorado’s Algorithmic Accountability Law, effective February 2026, grants consumers rights to notice, explanation, and appeal when AI systems make decisions about them.

For creators specifically, this means being intentional about the tools you use and understanding what you’re giving up in exchange for convenience. Read the privacy policies of your transcription tools. Ask whether your data is being used to train models. Opt out where you can. And push for better defaults from the platforms you depend on.

We're in a moment where the technology has outpaced the rules, and the companies building these products know it. Meta marketed its glasses as "designed for privacy" while reportedly routing intimate footage to human reviewers in Kenya. Otter.ai positioned itself as a productivity tool while allegedly using private conversations to train its AI. The pattern is consistent: ship fast, bury the disclosure, and let the lawsuits catch up later.

I don’t think the answer is to stop using technology. But I do think we need to be much more honest about the trade-offs we’re making. Every time you say “Hey Meta” or let an AI bot join your Zoom call, you’re making a decision about your data, and potentially about the data of everyone around you.

My company, What’s Trending, previously covered this story here.

Find me at SXSW!

I'm heading to EU House at SXSW for a fireside chat with EU Deputy Ambassador Ruth Bajada on how tech is reshaping work, life and mental health for creators. Join us Friday, March 13 at Speakeasy in Austin from 5:30 - 6:30 p.m. CT. Free to attend. 🔗 RSVP

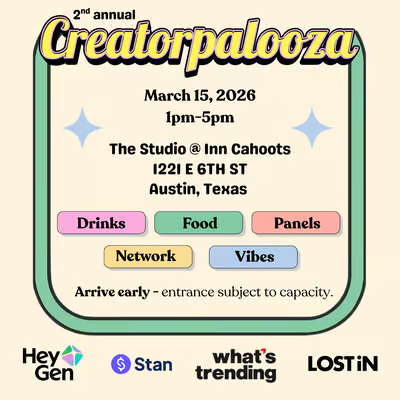

Creatorpalooza is back! We're bringing panels on brand deals, AI avatars, mental health and podcast growth to SXSW on Sunday, March 15 from 1 - 5 p.m. CT at The Studio at Inn Cahoots. Free event, co-presented by HeyGen, Stan, LOSTiN and ATX Creator Meetups. Registration required.

We're launching virtual group therapy for creators in California through Creators 4 Mental Health x Revive Health Therapy. Sliding-scale, designed for the unique pressures of creator life. More details coming soon. 🔗Creators4mentalhealth.com/grouptherapy

Friendly Reminder

Most work is best approached with patience and a focus on the long game, even when it feels urgent.

Remember, I'm Bullish on you!

With gratitude,